“What’s better: Claude or ChatGPT?” is the mind-boggling question every marketer is asking right now. As AI tools become essential to content workflows, understanding the differences between Claude and ChatGPT for marketing can mean the difference between a streamlined operation and a frustrating bottleneck.

In my opinion, both tools have legitimate strengths. ChatGPT – which you can train on your specific needs – excels at rapid ideation, email copy, and social content. However, Claude shines at long-form editing, brand voice consistency, and handling large context windows. The question isn’t really “is Claude better than ChatGPT?” It’s about which LLM you should use for each specific task.

In this guide, I’ll break down everything you need to know, including:

- Claude AI versus ChatGPT for writing

- ChatGPT versus Claude for email

- Claude versus ChatGPT pricing

- Claude versus ChatGPT integrations with your existing stack

Plus, my (very smart) colleagues have tested writing blog posts with ChatGPT, explored ChatGPT for SEO, evaluated ChatGPT alternatives, including Claude, and even used both for AI-powered spreadsheet tasks. Now I’m putting in my two cents, sharing what I’ve learned so you can make confident decisions about ChatGPT versus Claude for coding, content creation, and everything in between.

Let’s get into the good stuff.

Claude vs. ChatGPT: Which is better?

Here’s my hot take: I think Claude is the better LLM … and I’m not afraid to say it.

Don’t get me wrong. ChatGPT has its strengths, and I’ve used it plenty for quick drafts. But when it comes to the work that actually matters (the stuff that builds trust, drives conversions, and represents your brand), Claude consistently delivers superior results.

Here are two big reasons why I lean toward Claude as a content marketer:

- Writing quality: Claude versus ChatGPT for writing isn’t even close in my experience. Claude produces prose that sounds human, maintains tone across long documents, and requires fewer revision cycles before content is publish-ready.

- Context retention: Claude’s 200K-token context window lets me upload brand guidelines, source documents, and drafts simultaneously without the AI “forgetting” my instructions halfway through.

But, here’s the bottom line: Claude versus ChatGPT for marketing comes down to what you value most. If you prioritize speed and volume, ChatGPT delivers. If you prioritize quality and brand consistency, Claude wins.

That’s my opinion, and after months of using both tools daily, I’m sticking with it.

Which is better for common marketing workflows, Claude or ChatGPT?

You may not love what I’ll say next, but it’s the truth: The answer depends on the task.

In my opinion, Claude is good for long-form content editing and large context handling, making it ideal for:

- Blog posts

- Whitepapers

- Document review

However, that’s not to say that ChatGPT doesn’t have its perks. Personally, I think ChatGPT is best for:

- Rapid ideation

- Email copy

- Social content

Overall, most marketing teams achieve the best results by using Claude for editing and ChatGPT for drafting, treating them as complementary tools rather than competitors.

But if you really want a comprehensive comparison of each tool based on common marketing workflows, here’s a table that does just that:

| Marketing Workflow | Claude | ChatGPT | Winner |
| --- | --- | --- | --- |
| Content writing | Produces nuanced, on-brand long-form copy; handles 200K-token context windows for large documents | Generates quick first drafts; supports image generation via DALL·E | Claude for depth, ChatGPT for speed |
| Email marketing | Strong at personalization logic and A/B variant writing; consistent tone across sequences | Faster turnaround on high-volume email copy; built-in templates | Tie! (ChatGPT vs Claude for email depends on volume versus nuance) |
| Social media | Maintains brand voice across platforms; better at longer LinkedIn posts | Excels at short-form hooks and rapid iteration; creates images natively | ChatGPT for volume, but Claude for voice consistency |
| SEO briefs | Synthesizes large competitor datasets; outputs structured briefs with semantic relationships | Quick keyword clustering and outline generation | Claude for research-heavy briefs, ChatGPT for speed |
| Research reliability | Provides citations with web search; conservative about unverified claims | Browses the web in real-time; occasionally hallucinates sources | Claude for accuracy, ChatGPT for breadth |
| Long-form content | 200K-token context handles full ebooks and reports; strong structural editing | 128K-token context; better at iterative section-by-section drafting | Claude |
| Coding and automation | Reliable for marketing scripts, API integrations, and data parsing; fewer logic errors | Faster code generation; broader plugin ecosystem for no-code users | ChatGPT for speed, but Claude for accuracy |
| Integrations | Native Claude connector with HubSpot; API access for custom workflows; Zapier and Make support | 1,000+ plugins; GPT store for pre-built marketing tools; direct Zapier triggers | ChatGPT for plug-and-play; Claude for HubSpot-native workflows |
| Governance and privacy | Enterprise tier includes data retention controls, SSO, and audit logs; no training on user data by default | Team and Enterprise plans offer data controls; both require opt-out for training exclusion | Claude |

So, what does this mean for your AI-assisted workflows?

When evaluating Claude AI versus ChatGPT for writing, consider your content type. I suggest using ChatGPT for high-velocity tasks where speed matters most, including:

- Social captions

- Email subject lines

- Quick drafts

Alternatively, I propose using Claude for:

- Long-form editing

- Brand-sensitive content

- Research synthesis (where accuracy and context retention are critical)

Claude vs. ChatGPT for marketing content and on‑brand editing

In my experience as an in-house writer for a big-name SaaS brand, marketing teams truly achieve the best results by using Claude for editing and ChatGPT for drafting.

As I’ve already mentioned, this division leverages each tool’s core strengths. Claude excels at long-form content editing and handling complex contexts, while ChatGPT is best for rapid ideation, email copy, and social content.

But, here’s the key takeaway: understanding when to deploy each tool transforms AI from a novelty into a production-grade content engine.

To put my previous statement into practice, in the next section, I’ll talk through how to use Claude for content and editing.

When to use Claude for content and editing

If you’re wondering about when to actually use Claude AI instead of ChatGPT for writing, I’m here to break it down for you in layman’s terms.

Here’s why I think Claude is the right option in these scenarios:

- Long-form editing and revision: Claude’s 200K-token context window holds entire style guides, brand documentation, and draft content simultaneously. (For example, try uploading your 50-page brand book alongside a blog draft; Claude will apply voice rules without losing context mid-edit.)

- Structural reorganization: Claude identifies logical gaps, redundant sections, and flow issues across documents up to 150,000 words. It also rewrites transitions and restructures arguments while preserving the original meaning.

- Tone-true refinement: Claude maintains a consistent voice across extended pieces. It catches subtle shifts (from conversational to corporate, from active to passive) that erode brand identity.

- Compliance-sensitive content: Claude offers stronger privacy and governance controls for enterprise teams. Content requiring legal review, HR approval, or regulatory compliance benefits from Claude’s audit-friendly outputs and data handling policies.

When to use ChatGPT for content creation

Now, here on the HubSpot Blog, you’re always welcome to have your own opinion, especially regarding AI usage. However, I’m a strong advocate of ChatGPT for content creation.

Here’s why I think it’s the stronger choice for speed and versatility:

- Rapid first drafts: ChatGPT generates usable copy faster for high-volume needs, such as product descriptions, ad variants, and landing page sections.

- Format experimentation: Need the same message as a LinkedIn post, email subject line, Instagram caption, and Google ad? ChatGPT iterates across formats quickly.

- Visual content pairing: DALL·E integration lets ChatGPT generate accompanying images, infographic concepts, and social graphics alongside copy.

- Template-based content: ChatGPT’s custom GPTs and pre-built prompts accelerate repetitive tasks, such as weekly newsletters or social calendars.

Brand voice control: step-by-step setup

I may have a strong perspective on AI tool selection, but I won’t tell you that one tool is better without showing you why. Below, I’ve created two step-by-step guides for brand voice control, for both Claude and ChatGPT.

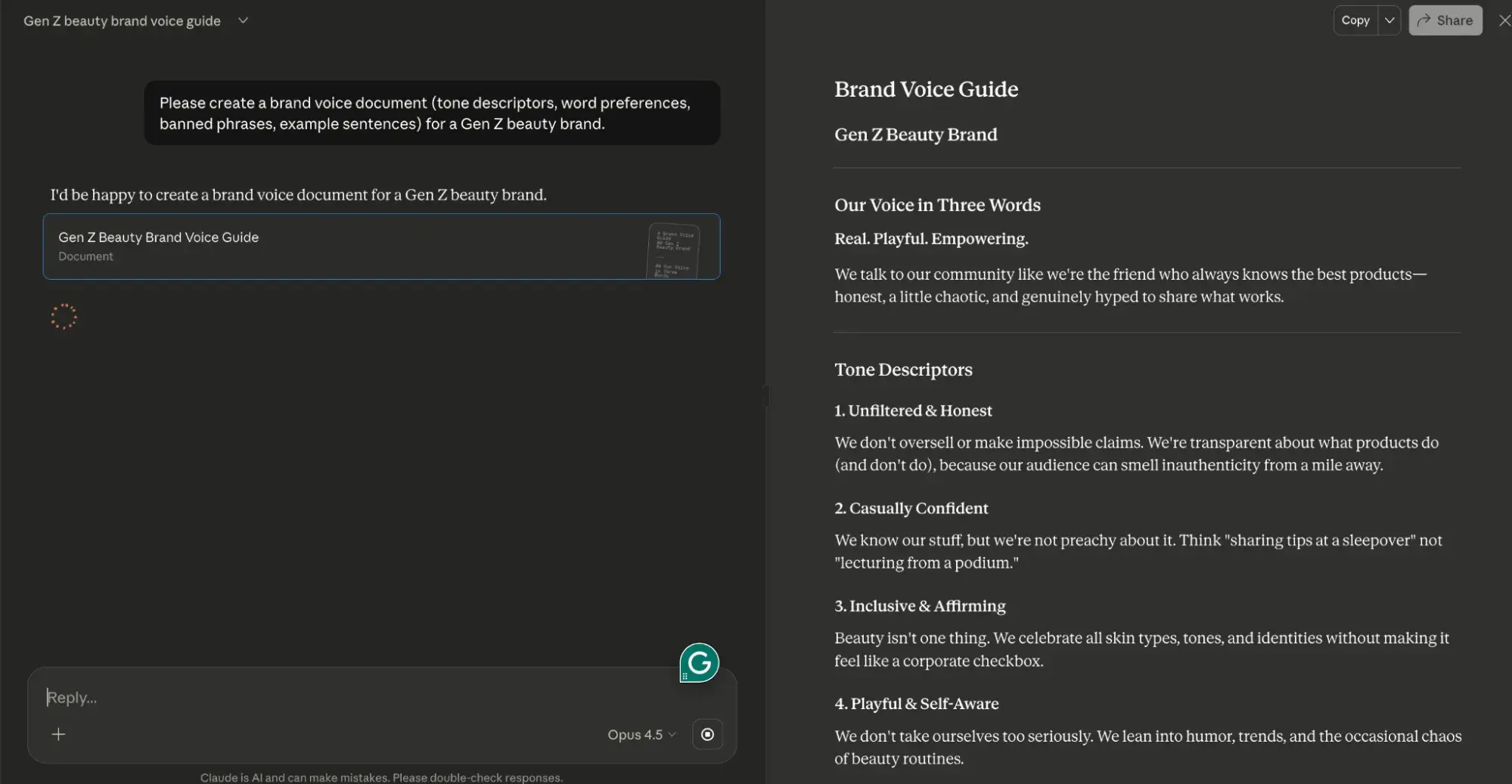

For Claude:

- Create a brand voice document (tone descriptors, word preferences, banned phrases, example sentences).

- Upload the document at the start of each project session. (Claude’s Projects feature retains it across conversations.)

- Paste draft content and prompt: “Edit this to match our brand voice document exactly. Flag any sections where the original tone conflicts with guidelines.”

- Review Claude’s tracked changes and rationale before accepting edits.

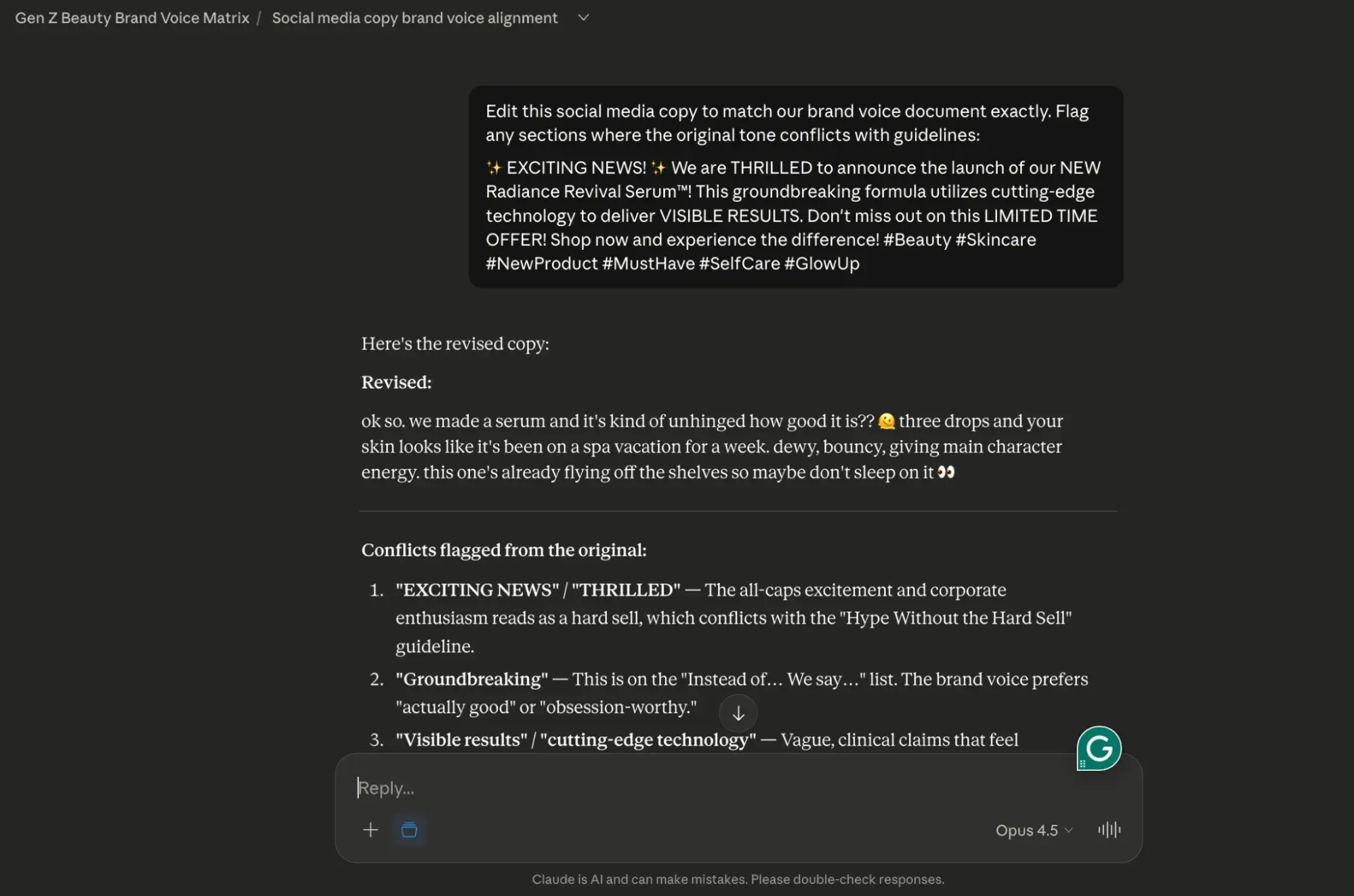

To ensure that this works for you, I’ve tested it out myself. Take a look:

First, I used Claude to create a faux brand voice guide for a Gen Z beauty brand, using the parameters I described above.

Next, I took that Claude-generated brand voice guide for my faux Gen Z beauty brand and dropped it into a Claude Project.

Then, I used the prompt (in step 3) above to edit some potential social media copy.

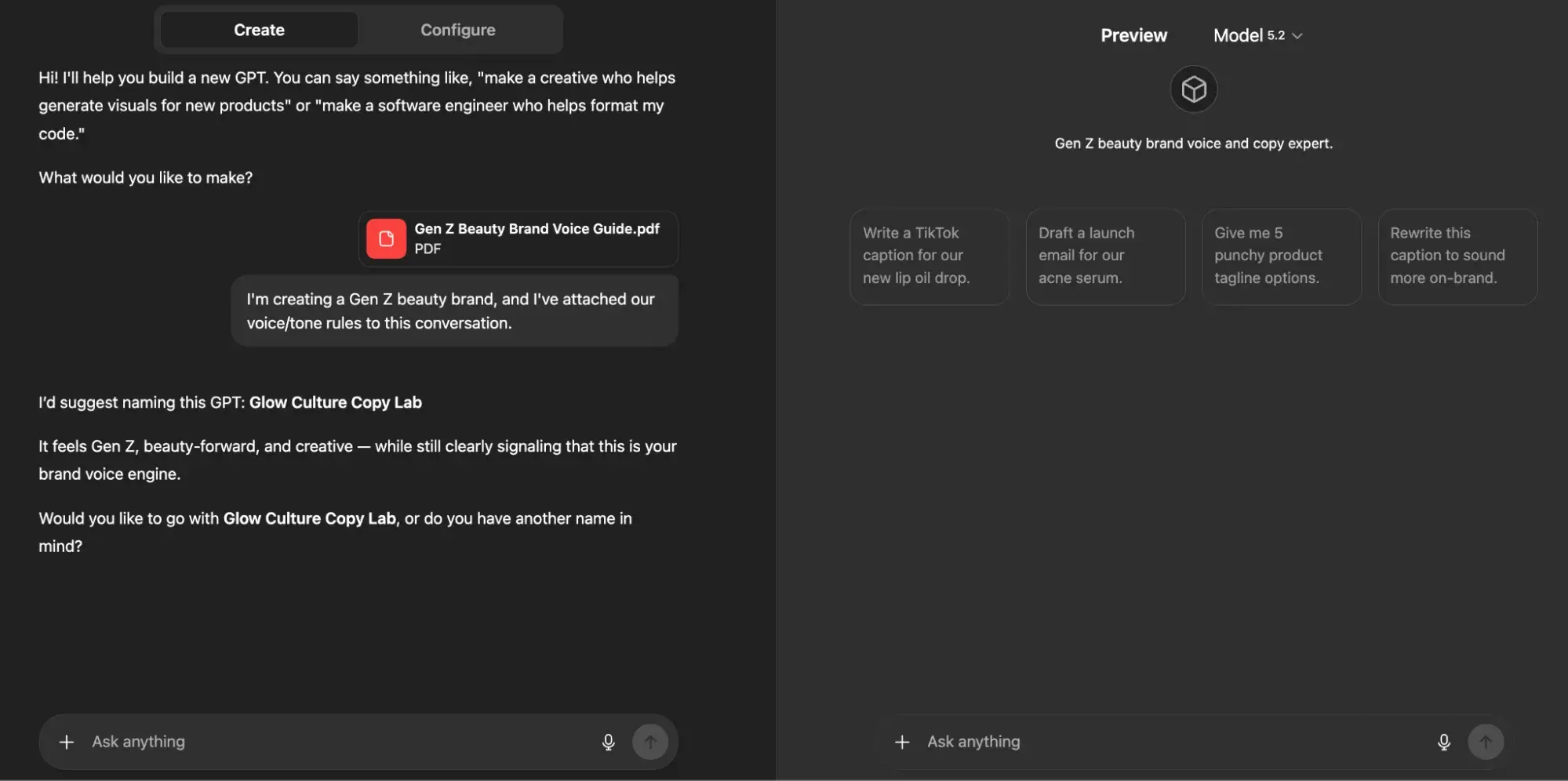

For ChatGPT:

- Build a custom GPT with your brand voice rules embedded in the system prompt.

- Include 3 to 5 example paragraphs showing ideal tone.

- Use the custom GPT for all drafting tasks to ensure baseline consistency.

- Export drafts to Claude for final tone-matching against your full brand documentation.

Again, I wanted to be sure this framework worked for you, so I’ve tested it. Here’s how it went:

First, I gave ChatGPT the same brand voice guide that I fed to Claude.

Then, as I outlined above, I provided my custom GPT with three examples of how I’d like the tone and voice of my Gen Z beauty brand to be executed via social media.

From this point forward, if I were actually building this brand (which I’ve now named “Skin Agenda” – thanks ChatGPT!), I would continue to use this custom GPT as a space to ideate and iterate on ideas for it.

Approval flow integration: Claude and ChatGPT in HubSpot

Want to use both tools in a single content pipeline? Well, you’re in luck. HubSpot’s smart CRM enables seamless integration of Claude and ChatGPT into marketing workflows through these approval pathways:

- Draft stage: ChatGPT generates initial content via API or Zapier trigger.

- Edit stage: Claude refines drafts using the native Claude connector with HubSpot, applying brand voice and structural improvements.

- Review stage: Content routes to HubSpot’s Content Hub for team review, version control, and approval tracking.

- Publish stage: Approved content deploys directly from Content Hub to blogs, landing pages, or email campaigns.

This CMS-approved workflow answers the question “Is Claude better than ChatGPT?” with nuance: Claude is better for editing, governance, and context-heavy tasks, while ChatGPT leads for speed and format variety.

The “Claude-versus-ChatGPT-for-marketing” argument isn’t about choosing one; it’s about sequencing both for maximum output quality and efficiency.

Claude vs. ChatGPT for email and social copy

As I already mentioned, ChatGPT is best for rapid ideation, email copy, and social content; Claude is better suited for long-form content editing and handling large amounts of context.

So, the question of whether ChatGPT versus Claude is better for email depends on whether you prioritize speed or nuance.

In the following section, I’ll break down how each tool performs across key email and social tasks.

Subject line and preview text generation

In my opinion, below are ChatGPT’s strengths when it comes to subject line and preview text generation:

- Generates 20+ subject line variants in seconds with character count constraints

- Tests emotional angles (urgency, curiosity, benefit-led, question-based) simultaneously

- Pairs subject lines with matching preview text that extends the hook without redundancy

Comparatively, here are Claude’s strengths:

- Analyzes your existing high-performing subject lines to identify patterns before generating new options

- Maintains brand voice consistency across subject line batches

- Flags compliance issues (misleading claims, spam trigger words) during generation

Recommended workflow: Use ChatGPT to generate initial subject line batches, then run top candidates through Claude with your brand guidelines to filter for tone alignment.

Claude vs. ChatGPT for SEO briefs and trustworthy research

So, is Claude better than ChatGPT for generating SEO briefs and conducting accurate research? Honestly, it’s a tough call, but I can say with confidence that both tools require human verification.

Before I get into the details, take a look at the table below for a quick comparison of how each tool performs across common SEO tasks.

Model behavior comparison for SEO tasks

| SEO Task | Claude | ChatGPT | Best Choice |
| --- | --- | --- | --- |
| Content briefs | Synthesizes multiple source documents, maintains structural consistency across detailed briefs | Generates briefs quickly, but may lose coherence in complex multi-section documents | Claude for comprehensive briefs; ChatGPT for simple briefs |
| Blog outlines | Produces logically structured outlines with clear hierarchies, handles nuanced topic relationships | Fast outline generation, strong at generating multiple variations quickly | Claude for depth; ChatGPT for speed |
| Keyword clustering | Groups keywords by semantic relationships, and identifies content gaps across clusters | Rapid clustering with basic categorization, good for initial groupings | Tie! ChatGPT is faster; however, Claude is more |
| Topic cluster planning | Maps pillar-cluster relationships across large content ecosystems | Generates cluster ideas quickly; less effective at maintaining cross-cluster coherence | Claude for complex architectures |
| Competitor content analysis | Processes multiple competitor pages simultaneously within the context window | Requires chunking for large competitive sets; faster for single-page analysis | Claude for multi-competitor analysis |
| Search intent classification | Accurate intent categorization with explanations | Quick classifications occasionally oversimplify mixed-intent queries | Claude for accuracy |

Claude vs. ChatGPT for SEO research

Struggling to choose between Claude and ChatGPT for SEO research? I get it. When I’m struggling with decision-making, I segment my approach based on two things:

- My end goal

- The capabilities of the tool I’m using

Choose Claude when your SEO work involves:

- Briefs requiring synthesis of 5+ source documents

- Topic clusters with 15+ supporting pages to map

- Competitive analysis across multiple URLs

- Content audits requiring consistency checks across large page sets

- Research where factual accuracy directly impacts content quality

And, alternatively, choose ChatGPT when you need:

- Quick keyword brainstorms for new topics

- Multiple outline variations to evaluate

- Rapid title and meta description drafts

- Initial content gap hypotheses before deeper research

- Fast turnaround on simple, single-topic briefs

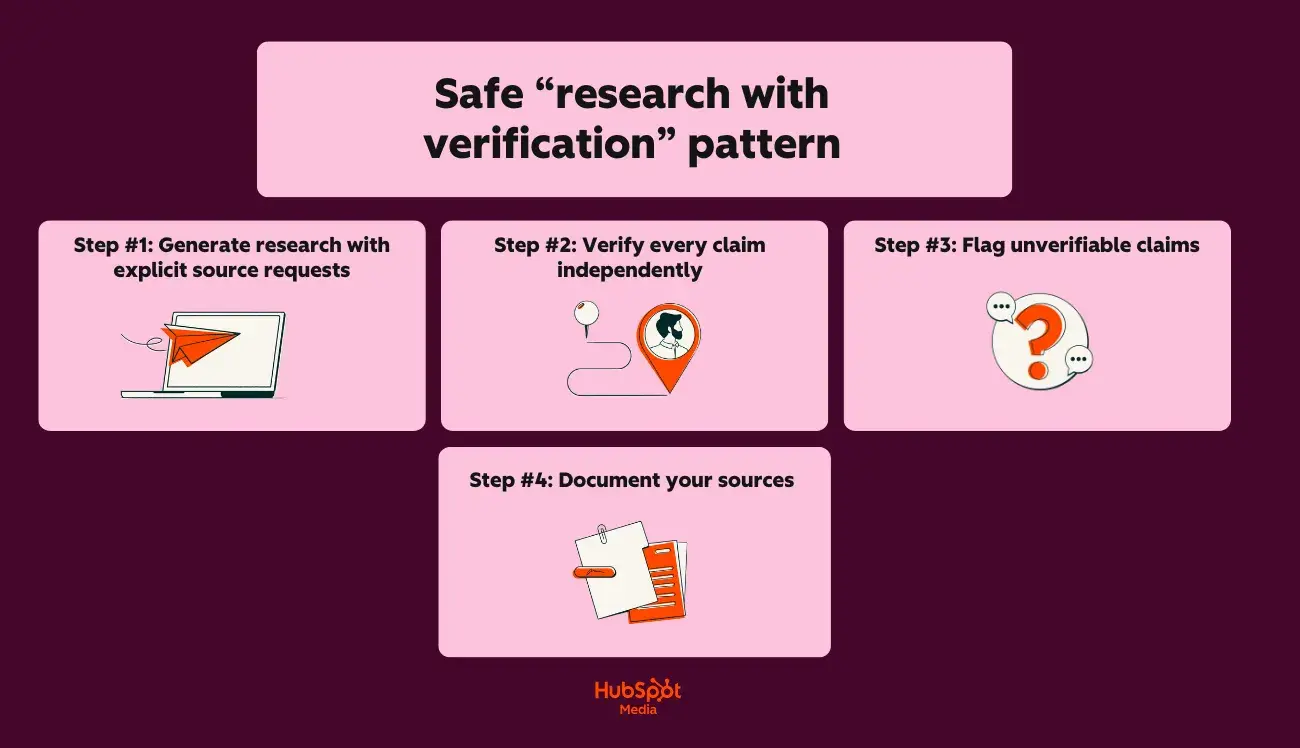

Safe “research with verification” pattern

Neither Claude nor ChatGPT should be trusted as a primary research source. Both can:

- Hallucinate statistics

- Misattribute quotes

- Fabricate sources

Follow this verification pattern for trustworthy research:

Step #1: Generate research with explicit source requests

Start with this prompt:

“Provide 5 statistics about [topic] that I can use in a blog post.

For each statistic, include:

- The specific claim

- The original source (organization, publication, study name)

- The year of publication”

Step #2: Verify every claim independently

Next, do the following:

- Search for the exact statistic in the claimed source

- Confirm the source exists and is credible

- Verify the data matches what the AI provided

- Check publication dates for currency

Step #3: Flag unverifiable claims

If you’re sensing inaccuracy, proceed as follows:

- If you can’t locate the source, don’t use the statistic

- If the source exists but the data differs, use the verified version

- If the AI admitted uncertainty, prioritize verification

Step #4: Document your sources

Lastly, be sure to:

- Maintain a source spreadsheet for each content piece

- Record: claim, source URL, verification date, verification status

- Link directly to primary sources in your content
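If you'd rather keep that source log as a file than as a hand-built spreadsheet, here's a minimal Python sketch of one way to do it. The column names mirror the fields listed above; the function names, the example claim, and the URL are all placeholders I made up for illustration, not part of any tool's API.

```python
import csv
import io
from datetime import date

# Columns follow the "claim, source URL, verification date, verification status" list.
FIELDS = ["claim", "source_url", "verification_date", "verification_status"]


def make_entry(claim: str, source_url: str, status: str, verified_on: date) -> dict:
    """Build one log row; status might be 'verified', 'corrected', or 'unverifiable'."""
    return {
        "claim": claim,
        "source_url": source_url,
        "verification_date": verified_on.isoformat(),
        "verification_status": status,
    }


def write_log(entries: list[dict], fileobj) -> None:
    """Write all entries as CSV with a header row."""
    writer = csv.DictWriter(fileobj, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(entries)


# Hypothetical example row; the claim and URL are placeholders, not real data.
entries = [
    make_entry("Example claim text", "https://example.com/report", "verified", date(2025, 1, 15))
]
buffer = io.StringIO()  # swap in open("sources.csv", "w", newline="") for a real file
write_log(entries, buffer)
print(buffer.getvalue())
```

One file per content piece keeps the audit trail easy to hand off to an editor.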

Hallucination prevention checklist

Use this checklist before publishing any AI-assisted SEO content:

Before prompting:

- Provide the AI with verified source documents when possible

- Request citations for all factual claims in your prompt

- Ask the AI to flag uncertainty: “Note any claims you’re less than 90% confident about”

- Specify: “Do not invent statistics or sources”

Next, during review:

- Verify every statistic against the original source

- Confirm quoted experts actually said what’s attributed to them

- Check that cited studies exist and contain the referenced data

- Validate company names, product names, and proper nouns

- Cross-reference dates, percentages, and numerical claims

Then, before publishing:

- Replace AI-suggested sources with direct links to primary sources

- Remove any claims you couldn’t independently verify

- Add “as of [date]” qualifiers to time-sensitive statistics

- Run content through HubSpot’s AI Search Grader to evaluate optimization and accuracy signals

Lastly, beware of these red flags that indicate potential hallucinations:

- Statistics with suspiciously round numbers (exactly 50%, precisely 1 million)

- Sources you’ve never heard of that sound authoritative

- Quotes that seem too perfectly aligned with your argument

- Data points that contradict your industry knowledge

- Citations to “recent studies” without specific names or dates

Claude vs. ChatGPT for long‑form content and sales enablement

When it comes to LLM usage for long-form content and sales enablement, I’m all for experimentation. But regardless of your approach or which LLM you use, what matters most is how much context the LLM can hold while executing your request.

This capacity is called the “context window”: an LLM like ChatGPT has only a limited amount of space to process and remember information from your conversation.

Take a peek at the comparison table below to see how Claude and ChatGPT stack up:

| Feature | Claude | ChatGPT (GPT-5.2) |
| --- | --- | --- |
| Maximum context window | 200K tokens (~150,000 words) | 128K tokens (~96,000 words) |
| Practical working limit | ~100K tokens for optimal performance | ~64K tokens for optimal performance |
| Full ebook in a single context | Yes | Partial (may require chunking) |
| Brand guide + draft + instructions | Easily fits | Fits with constraints |

So, what does this mean for long-form content? Allow me to elaborate:

- Claude can hold your entire style guide, brand voice document, and a 50-page draft simultaneously without losing context

- ChatGPT requires more careful prompt management for documents exceeding 40-50 pages
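To make the math concrete, here's a rough planning sketch in Python. It assumes the ratio implied by the table above (200K tokens is roughly 150,000 words, so about 0.75 words per token). Real tokenizers vary by model and by text, and the function names and word counts are my own illustrative choices, so treat the output as an estimate only.

```python
# Rough planning sketch: estimate whether a set of documents fits in a context window.
# Assumption: ~0.75 words per token, the ratio implied by the comparison table
# (200K tokens ~ 150,000 words). Real tokenizers vary, so results are estimates.

WORDS_PER_TOKEN = 0.75


def estimate_tokens(word_count: int) -> int:
    """Convert a word count to an approximate token count."""
    return round(word_count / WORDS_PER_TOKEN)


def fits_in_context(word_counts: list[int], context_tokens: int) -> bool:
    """Return True if the combined documents fit within the given token budget."""
    total_tokens = sum(estimate_tokens(w) for w in word_counts)
    return total_tokens <= context_tokens


# Hypothetical inputs: a brand guide, a long draft, and short instructions (in words).
docs = [12_000, 25_000, 500]
print(fits_in_context(docs, 100_000))  # checks against a ~100K-token working limit
```

If the check fails for the tool you're using, that's your cue to chunk the content.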

In the following section, I’ll delve into a cool feature set that makes producing long-form content with Claude easy. Let’s chat through Claude Projects and Artifacts.

Using Claude Projects and Artifacts for long-form work

So, what are Claude Projects and Artifacts? Here’s the TLDR version:

- Claude Projects allows you to create dedicated workspaces with their own chat histories and knowledge bases

- Claude Artifacts allows you to turn ideas into functional apps, tools, or content

Here’s a closer look at what Claude Projects can do for your long-form work:

- Upload persistent documents (brand guides, style sheets, product documentation) that remain accessible across all conversations within the project

- Create separate projects for different content types: “Ebooks,” “Case Studies,” “Enablement Decks”

- Reference uploaded documents without re-pasting: “Apply our brand voice guide to this draft.”

Additionally, here’s what you can do with Claude Artifacts:

- Generate standalone content pieces (outlines, chapters, complete drafts) that display in a separate panel

- Edit artifacts iteratively without losing conversation context

- Export completed artifacts directly to your CMS or document editor

- Version artifacts within a single conversation for comparison

Now that you have an understanding of ways to optimize long-form content production with Claude, let’s talk chunking strategies in the following section.

Chunking strategies for long-form content

When documents exceed practical context limits or when you need tighter control over output, this is when you’ll need to “chunk” (aka break your content into smaller, manageable segments).

Here’s the best part about chunking: you can take a few different approaches when doing it. Check out some of my favorites:

1. Chapter-by-chapter chunking

Chapter-by-chapter chunking works as follows:

- Generate a complete outline with all chapter summaries first

- Draft each chapter individually, referencing the master outline

- Include “Previously covered:” context at the start of each chapter prompt

- Compile chapters and run a continuity check across the full document

2. Section-based chunking

Section-based chunking (my favorite approach) works a little differently, but I think it’s pretty intuitive once you’ve given it a try. Here’s a table I like to refer to when using section-based chunking:

| Content Type | Recommended Chunk Size | Context to Include |
| --- | --- | --- |
| Ebook (10+ chapters) | 1 chapter per prompt | Outline + previous chapter summary |
| Guide (5 to 10 sections) | 2 to 3 sections per prompt | Full outline + adjacent sections |
| Case study | Full document (typically fits) | Template + brand guide |
| Enablement deck | 5 to 10 slides per prompt | Deck outline + messaging framework |

3. Overlap technique for continuity

Lastly, here’s an approach I like to use when I want to preserve narrative flow and consistency across chunks:

- Include the last 2 to 3 paragraphs of the previous chunk in each new prompt

- Reference specific transitions: “Continue from where we discussed [topic]”

- Maintain a running summary document that travels with each chunk
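If you prepare chunks outside the chat window, the overlap idea can be sketched in a few lines of Python. This is a minimal illustration, assuming paragraphs are separated by blank lines; the function name and the default sizes are my own choices, not a feature of either tool.

```python
# Sketch of the overlap technique: split a draft into chunks of paragraphs,
# repeating the last few paragraphs of each chunk at the start of the next.
# Assumption: paragraphs are separated by blank lines; sizes are illustrative.


def chunk_with_overlap(text: str, chunk_size: int = 10, overlap: int = 2) -> list[list[str]]:
    """Return chunks of paragraphs, each sharing `overlap` paragraphs with the previous one."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(paragraphs), step):
        chunks.append(paragraphs[start:start + chunk_size])
        if start + chunk_size >= len(paragraphs):
            break  # the final paragraphs are already covered
    return chunks
```

Each chunk then goes into its own prompt, with the repeated paragraphs giving the model the tail end of what came before.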

Outline strategies by content type

To help you maximize efficiency with Claude, below are step-by-step instructions for creating an outline that’ll ultimately become long-form when fully drafted, segmented by various long-form content types:

For ebooks and comprehensive guides, use this approach:

- Start with a topic brief: audience, goal, key differentiators

- Generate a detailed outline with Claude (leverage full context window)

- Request chapter summaries (2-3 sentences each) before drafting

- Draft the introduction and conclusion first to anchor the tone

- Fill the middle chapters referencing the established bookends

For case studies, try this workflow:

- Upload case study template + raw interview notes/data

- Generate structured outline: Challenge → Solution → Results → Quote

- Draft full case study in a single pass (typically under 3,000 words)

- Between Claude and ChatGPT, I favor Claude for writing case studies because it maintains narrative consistency

For lengthy enablement decks, give this method a try:

- Define deck purpose: sales training, product launch, competitive positioning

- Generate a slide-by-slide outline with a speaker notes framework

- Draft content in logical groupings (problem slides, solution slides, proof slides)

- Request variations for different audience segments

Lastly, for content briefs that’ll be shared with external writers, try this:

- Use Claude to generate comprehensive briefs from minimal inputs

- Include: target keywords, audience profile, competitive angles, required sections, tone guidelines

- Claude’s context window holds reference materials (competitor content, source documents) alongside brief requirements

Handoff patterns: Long-form to sales collateral

A big part of working in marketing is knowing that the long-form content you create will end up in the hands of sales folks.

To guarantee seamless handoffs from marketing to sales, follow this simple step-by-step framework below:

| Step | Tool (Claude or ChatGPT) | Output |
| --- | --- | --- |
| Complete ebook draft | Claude | Full document in Claude Artifacts |
| Extract key statistics | Claude | Bulleted stat list with context |
| Generate one-pagers | ChatGPT | Quick-turn summaries by chapter |
| Create social proof snippets | ChatGPT | Quote cards, testimonial formats |
| Build slide content | ChatGPT | Deck-ready bullet points |

Pro Tip: Export completed assets to Marketing Hub via HubSpot’s Claude connector for staging, approval routing, and team-wide access.

Claude vs. ChatGPT for simple marketing automations and analysis

ChatGPT versus Claude for coding depends on task complexity: ChatGPT for speed on simple scripts, Claude for accuracy on multi-step operations.

But there’s more to AI-assisted automation than you think. Using Claude or ChatGPT for marketing automation and analysis requires the right use cases. To help you get started, I’ve outlined a few for you to start with below:

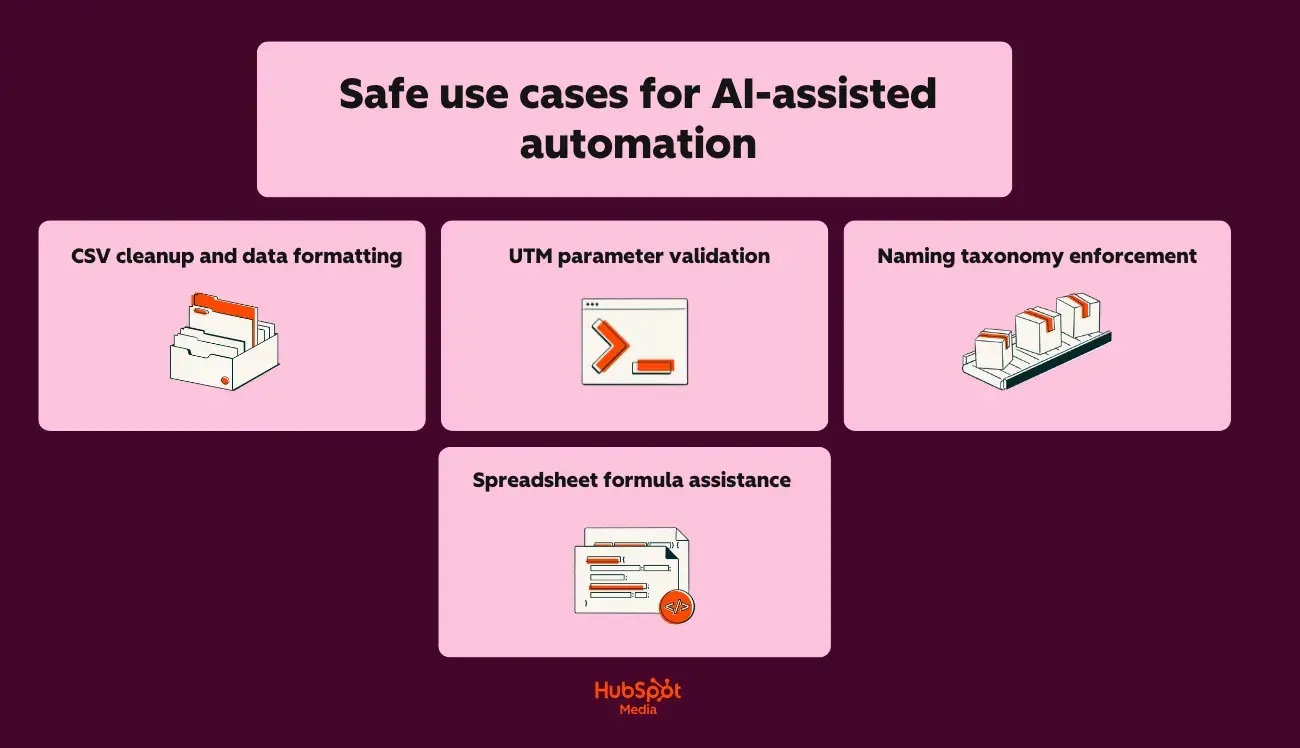

Safe use cases for AI-assisted automation

For CSV cleanup and data formatting, try:

- Standardizing date formats across exported campaign data

- Removing duplicate rows and trimming whitespace

- Converting column headers to consistent naming conventions

- Splitting or combining fields (e.g., separating “City, State” into two columns)
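Here's roughly what a cleanup script for those four tasks might look like, so you know what to expect when you ask either tool for one. It's a sketch under stated assumptions: rows arrive as dictionaries of strings (as `csv.DictReader` produces), the column names `send_date` and `location` are hypothetical, and the accepted date formats are a guess you'd adjust to your own exports.

```python
from datetime import datetime

# Assumed input date formats; extend this tuple to match your own exports.
# Note that "%m/%d/%Y" reads 03/02/2025 as March 2 (US-style).
DATE_FORMATS = ("%m/%d/%Y", "%Y-%m-%d", "%d %b %Y")


def normalize_header(name: str) -> str:
    """Convert a header such as 'Send Date ' to 'send_date'."""
    return name.strip().lower().replace(" ", "_")


def to_iso_date(value: str) -> str:
    """Try each known format; return the value unchanged if none match."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    return value


def clean_rows(rows: list[dict], date_column: str = "send_date",
               location_column: str = "location") -> list[dict]:
    """Normalize headers, trim whitespace, fix dates, split location, drop duplicates."""
    cleaned, seen = [], set()
    for row in rows:
        record = {normalize_header(k): v.strip() for k, v in row.items()}
        if date_column in record:
            record[date_column] = to_iso_date(record[date_column])
        if location_column in record:
            city, _, state = record.pop(location_column).partition(",")
            record["city"], record["state"] = city.strip(), state.strip()
        key = tuple(sorted(record.items()))
        if key not in seen:  # drop exact duplicates after cleanup
            seen.add(key)
            cleaned.append(record)
    return cleaned
```

Deduplicating after cleanup matters: two rows that differ only by stray spaces or date format collapse into one.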

For UTM parameter validation, you should:

- Check URLs for missing or malformed UTM parameters

- Verify utm_source, utm_medium, and utm_campaign match documented taxonomy

- Flag inconsistent capitalization or spacing errors

- Generate corrected URLs for reimport
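As an illustration, a UTM check like this can be written with nothing but Python's standard `urllib.parse` module. The allowed values below are a hypothetical taxonomy I invented for the example, and the rule "lowercase, underscores instead of spaces" is an assumed convention; swap in your own documented rules.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

REQUIRED = ("utm_source", "utm_medium", "utm_campaign")
# Hypothetical taxonomy; replace with your documented values.
ALLOWED = {
    "utm_source": {"newsletter", "linkedin", "google"},
    "utm_medium": {"email", "social", "cpc"},
}


def normalize(value: str) -> str:
    """Assumed convention: trimmed, lowercase, underscores instead of spaces."""
    return value.strip().lower().replace(" ", "_")


def check_utm(url: str) -> list[str]:
    """Return a list of human-readable problems found in the URL's UTM parameters."""
    params = dict(parse_qsl(urlsplit(url).query, keep_blank_values=True))
    problems = []
    for name in REQUIRED:
        value = params.get(name, "")
        if not value.strip():
            problems.append(f"missing {name}")
            continue
        if value != normalize(value):
            problems.append(f"{name} has inconsistent capitalization or spacing: {value!r}")
        allowed = ALLOWED.get(name)
        if allowed and normalize(value) not in allowed:
            problems.append(f"{name} not in taxonomy: {value!r}")
    return problems


def fix_utm(url: str) -> str:
    """Return the URL with UTM values normalized; other parameters are left alone."""
    parts = urlsplit(url)
    pairs = [
        (k, normalize(v)) if k.startswith("utm_") else (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
    ]
    return urlunsplit(parts._replace(query=urlencode(pairs)))
```

Note that `fix_utm` can only repair formatting. A missing parameter or an off-taxonomy value still needs a human decision.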

When working with naming taxonomy enforcement, try the following:

- Validate campaign names against your naming convention rules

- Identify assets that don’t follow folder/file naming standards

- Generate compliant names for new campaigns based on templates

- Audit historical assets for taxonomy drift
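Naming rules are a natural fit for a regular expression. The sketch below assumes a made-up convention of `<year>_<quarter>_<channel>_<campaign-slug>` (for example, `2025_q1_email_spring-sale`); the pattern, channel list, and function names are all hypothetical and only there to show the shape of the check.

```python
import re

# Hypothetical convention: <year>_<quarter>_<channel>_<campaign-slug>
PATTERN = re.compile(
    r"(?P<year>\d{4})_(?P<quarter>q[1-4])_(?P<channel>email|social|paid)_"
    r"(?P<slug>[a-z0-9]+(?:-[a-z0-9]+)*)"
)


def is_compliant(name: str) -> bool:
    """Return True if the whole name matches the convention."""
    return PATTERN.fullmatch(name) is not None


def build_name(year: int, quarter: int, channel: str, title: str) -> str:
    """Generate a compliant name from a free-text campaign title."""
    slug = re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")
    name = f"{year}_q{quarter}_{channel.lower()}_{slug}"
    if not is_compliant(name):
        raise ValueError(f"cannot build a compliant name from: {name!r}")
    return name


def audit(names: list[str]) -> list[str]:
    """Return the names that drift from the convention."""
    return [n for n in names if not is_compliant(n)]
```

Running `audit` over an export of historical campaign names gives you a quick list of assets to rename.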

Lastly, for spreadsheet formula assistance, try:

- Writing VLOOKUP, INDEX/MATCH, or XLOOKUP formulas

- Creating pivot table configurations

- Building conditional formatting rules

- Debugging formula errors

I recommend using Claude for any AI-assisted automation that requires precision. Now that I’ve given you a few use cases to consider, next, I’ll talk through what you’ll use to keep your outputs safe and reliable.

Guardrail checklist for AI-generated code and analysis

I’ll say this once, maybe I’ll say it again, but regardless, read this statement carefully: Never deploy AI-generated code or act on AI-generated analysis without human review.

Here’s what you should do before running any AI-generated script:

- Read the entire script line by line (don’t assume correctness)

- Verify the script only accesses intended files/data sources

- Check for hardcoded values that should be variables

- Confirm no destructive operations (DELETE, TRUNCATE, overwrite) exist without explicit safeguards

- Test on a sample dataset before running on production data

- Back up the original data before any transformation

- Run in a sandbox environment first when possible
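For the destructive-operations check, a quick automated first pass can help you spot lines to look at first. The Python sketch below is only that: the keyword list is my own assumption and far from exhaustive, so it supplements the line-by-line read rather than replacing it.

```python
import re

# First-pass scan for operations that can destroy data. The pattern list is an
# assumption and far from exhaustive; it supplements a line-by-line read.
RISKY = re.compile(
    r"\b(DELETE|TRUNCATE|DROP)\b|\brm\s+-rf\b|\bos\.remove\b|\bshutil\.rmtree\b",
    re.IGNORECASE,
)


def flag_risky_lines(script: str) -> list[tuple[int, str]]:
    """Return (line_number, line) pairs that contain a risky-looking operation."""
    return [
        (number, line.strip())
        for number, line in enumerate(script.splitlines(), start=1)
        if RISKY.search(line)
    ]


# Hypothetical AI-generated snippet to scan.
sample = "import os\nrows = load()\nos.remove('data.csv')\ncursor.execute('DELETE FROM contacts')"
for number, line in flag_risky_lines(sample):
    print(f"line {number}: {line}")
```

An empty result doesn't mean a script is safe; it only means none of these particular patterns appeared.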

Also, before acting on AI-generated analysis, be sure to:

- Verify source data accuracy before accepting conclusions

- Cross-check calculations manually on a sample subset

- Question surprising findings (spoiler alert: AI can misinterpret data structures)

- Confirm the AI understood your column headers and data types correctly

- Check for hallucinated patterns (AI may invent correlations)

- Validate statistical claims with your analytics platform’s native reporting

Claude vs. ChatGPT: Data privacy, governance, and brand protection

When it comes to data privacy, governance, and brand protection comparisons, I’ll be honest with you: both Claude and ChatGPT provide adequate protections (when configured correctly, of course).

But I understand that you want to know about all the bells and whistles when it comes to this stuff, so, for your convenience, within this section, I’ll cover the following for both tools:

- Data handling policies

- Governance frameworks

- Brand protection strategies

Let’s get into it:

Claude vs. ChatGPT: Data privacy comparison

Here’s a quick glimpse of Claude’s and ChatGPT’s data privacy capabilities:

| Privacy Feature | Claude | ChatGPT |
| --- | --- | --- |
| Training data exclusion | Default: user data not used for training | Requires opt-out in settings or the Enterprise tier |
| Data retention (consumer tiers) | 30 days for trust and safety | 30 days for abuse monitoring |
| Data retention (enterprise) | Configurable, including zero retention | Configurable, including zero retention |
| SOC 2 Type II certification | Yes | Yes |
| HIPAA compliance (with BAA) | Enterprise tier | Enterprise tier |
| GDPR compliance | Yes | Yes |
| Data residency options | Available through the Enterprise tier | Available through the Enterprise tier |

Claude vs. ChatGPT: Governance capabilities (by tier)

Next, let’s take a glance at Claude’s and ChatGPT’s governance capabilities (by tier):

Claude’s governance features:

- Pro: Conversation history controls, data export

- Team: Admin console, usage analytics, workspace organization, SSO (SAML)

- Enterprise: Audit logs, custom data retention, VPC deployment options, dedicated support

ChatGPT’s governance features:

- Plus: Conversation history toggle, data export

- Team: Admin console, workspace management, SSO (SAML), usage caps per user

- Enterprise: Audit logs, custom data retention, Azure-based deployment, admin analytics dashboard

Brand protection strategies

When it comes to using LLMs, regardless of which one, one thing rings true: you have to train it how to represent your brand.

Below, I’ve provided some starter tips for establishing a firm brand protection foundation.

First, here’s a short ‘n’ sweet checklist for preventing brand voice drift:

- Upload comprehensive brand guidelines to Claude Projects or ChatGPT Custom GPTs

- Include approved terminology lists, banned phrases, and tone examples

Here’s what to do to prevent data leakage:

- Never paste customer PII directly into prompts

- Use placeholder tokens (Customer_A, Company_B) and replace after generation
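The placeholder approach is easy to script. Here's a minimal Python sketch, assuming you maintain the mapping of real values to placeholders yourself. The names in the example are fictional, and simple text replacement like this won't catch PII you forgot to list.

```python
# Sketch of the placeholder pattern: mask real values before prompting,
# then restore them in the generated copy. The mapping is maintained by you.


def mask(text: str, sensitive: dict[str, str]) -> str:
    """Replace each real value with its placeholder before sending text to an AI tool."""
    # Longest values first, so a short name never clobbers part of a longer one.
    for real in sorted(sensitive, key=len, reverse=True):
        text = text.replace(real, sensitive[real])
    return text


def unmask(text: str, sensitive: dict[str, str]) -> str:
    """Swap placeholders back to the real values after generation."""
    # Assumes no placeholder is a prefix of another (e.g. Customer_A vs Customer_AB).
    for real, placeholder in sensitive.items():
        text = text.replace(placeholder, real)
    return text


# Fictional example values.
mapping = {"Jane Doe": "Customer_A", "Acme Corp": "Company_B"}
prompt_text = mask("Jane Doe from Acme Corp renewed.", mapping)
print(prompt_text)
```

Keep the mapping out of the prompt itself; it should live only on your side.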

Here’s my advice for preventing unauthorized content publication:

- Route all AI-generated content through approval workflows before publishing

- Tag AI-assisted content in your CMS for audit purposes

- Marketing teams achieve the best results by using Claude for editing and ChatGPT for drafting (final human review remains mandatory!)

Pro Tip: Use HubSpot’s Data Hub to control which fields sync to external tools.

Claude vs. ChatGPT: Governance starter checklist for marketing teams

Now that we’ve covered the basics, use these other checklists to establish baseline AI governance before scaling usage:

For successful policy documentation, do the following:

- Create an AI acceptable use policy defining approved tools and use cases

- Document which content types require AI disclosure (internal versus external)

- Establish data classification rules (what can/cannot be shared with AI tools)

- Define approval authority for AI-generated customer-facing content

For implementing technical controls, try this out:

- Enable SSO for all AI tools (Team tier minimum)

- Configure data retention settings appropriate to your industry

- Disable training data sharing on ChatGPT (Settings → Data Controls)

- Set up workspace organization by team or function

- Connect your Claude and ChatGPT integrations through your CMS for centralized content staging

For effective access management protocols, it might be helpful to:

- Assign individual seats to users requiring audit trails

- Create shared accounts only for non-sensitive, internal use cases

- Review and revoke access quarterly

- Document API key ownership and rotation schedule

For effective quality control measures, do this:

- Establish mandatory human review before publication

- Create brand voice verification prompts for both tools

- Build feedback loops to flag AI outputs that miss brand standards

- Track error rates by tool to decide which tasks go to Claude and which go to ChatGPT

Lastly, for assured compliance alignment, do this:

- Confirm AI tool usage aligns with existing data processing agreements

- Update privacy policies if AI assists with customer communications

- Review industry-specific regulations (HIPAA, FINRA, GDPR) for AI implications

- Document AI governance decisions for audit readiness

Next, let’s chat through the decision that comes before data privacy stuff: pricing.

Claude vs. ChatGPT: Pricing and subscription levels

When it comes to Claude’s and ChatGPT’s pricing/subscription levels, here’s what you need to know:

- Claude versus ChatGPT pricing follows similar structures at consumer tiers (but diverges significantly at team and enterprise levels).

- Understanding where costs accumulate helps marketing teams budget accurately and avoid unexpected overages.

- API usage often becomes the hidden budget item that catches teams off guard.

And you likely already guessed this, but there’s more to the story when it comes to evaluating which LLM tool could be a fit for your team.

Lucky for you, I’ll deep-dive into pricing, show where costs add up, and, most importantly, provide recommendations based on your team’s needs below.

Claude vs. ChatGPT: Subscription tier comparison (quick glance)

| Tier | Claude | ChatGPT | Key Differences |
| --- | --- | --- | --- |
| Free | Claude.ai (limited messages) | ChatGPT Free (GPT-5 limited) | ChatGPT offers more free messages; Claude provides full model access with lower limits |
| Pro/Plus | $17/month (billed annually) or $20/month (billed monthly) | $20/month | Identical monthly pricing; Claude offers higher usage limits, ChatGPT includes DALL·E and advanced voice |
| Team | $20/user/month (billed annually) or $25/user/month (billed monthly) | $25/user/month (billed annually) | Both require minimum seats; however, Claude offers stronger privacy and governance controls for enterprise teams |
| Enterprise | Custom pricing | Custom pricing | Both require annual contracts; Claude emphasizes security, ChatGPT emphasizes plugin ecosystem |
| API | Pay-per-token | Pay-per-token | Pricing varies by model |

Claude vs. ChatGPT: Where costs add up

In the previous section, I gave a brief overview of the differences between Claude’s and ChatGPT’s pricing tiers. Next, I’ll outline how and where costs add up.

When investing in any software tool, it’s important to know where the hidden costs live. In this case, it’s rate limits and usage caps.

Below, I’ve outlined what the limitations could look like for Claude Pro and ChatGPT Plus, as well as Team tiers for either subscription:

- Claude Pro: Higher message limits than free tier, but heavy users (50+ long conversations daily) may hit caps

- ChatGPT Plus: Includes GPT-4o with usage limits

- Team tiers: Higher limits per user, but still capped

Another cost factor to consider is API usage. Take a glimpse at how much token consumption could cost you for both tools:

| Model | Input Cost (per 1M tokens) | Output Cost (per 1M tokens) |
| --- | --- | --- |
| Claude Sonnet 4.5 | $3.00 | $15.00 |
| Claude Sonnet 4 | $3.00 | $15.00 |
| GPT-5.2 | $1.75 | $14.00 |
| GPT-5.2 pro | $21.00 | $168.00 |

Of course, which model you choose and how many tokens you need are dependent upon how many seats you’ll be purchasing.
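If you want to turn the per-token prices above into a rough monthly number, here is a back-of-the-envelope sketch. The prices are copied from the table in this guide and will change over time, and the workload (200 drafts a month at roughly 2,000 input and 1,500 output tokens each) is a made-up assumption, so treat the result as a budgeting illustration rather than a quote.

```python
# USD per 1M tokens (input, output), copied from the table above.
# Assumption: these prices are current; check each vendor's pricing page before budgeting.
PRICES = {
    "Claude Sonnet 4.5": (3.00, 15.00),
    "GPT-5.2": (1.75, 14.00),
}

def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate API spend in USD for a given number of input and output tokens."""
    input_price, output_price = PRICES[model]
    return (input_tokens / 1_000_000) * input_price + (output_tokens / 1_000_000) * output_price

# Hypothetical month: 200 drafts, about 2,000 input and 1,500 output tokens each
monthly_in = 200 * 2_000
monthly_out = 200 * 1_500
for model in PRICES:
    print(model, round(estimate_cost(model, monthly_in, monthly_out), 2))
```

Output tokens cost several times more than input tokens on every model listed, so long generated drafts drive the bill more than long prompts do.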

In the next section, I’ll chat through when to get individual seats versus opting for shared access.

Planning seats vs. shared access

Deciding between individual seats and shared access can make or break your AI budget.

Here are a few indicators of when to assign individual seats:

- Team members need conversation history and saved prompts

- Audit trails are required for compliance

- Usage monitoring by individual contributors is necessary

- Claude and ChatGPT integrations require user-level permissions in your CMS

Conversely, here are a few indicators of when to provide shared access:

- Occasional users (fewer than 10 tasks/week)

- API-driven workflows where individual accounts aren’t needed

- Teams are testing before committing to a full rollout

So, which subscription do you need?

Still don’t know which subscription tier would be the best investment? No fear. To assist you in your decision-making, I’ve broken down recommendations based on:

- Content volume

- Number of users

- Approval needs

Take a gander:

1. Recommended approach based on content volume

| Monthly Content Output | Recommended Approach (by tier) |
| --- | --- |
| Under 20 pieces | Free tier |
| 20 to 50 pieces | Pro/Plus tier |
| 50 to 150 pieces | Team tier |

2. Recommended approach based on the number of users

| Team Size | Recommended Approach (by tier/subscription level) |
| --- | --- |
| 1 user | ChatGPT Plus or Claude Pro |
| 2 to 4 users | Mix of Pro subscriptions by role |
| 5 to 10 users | Mix of Pro subscriptions by role |
| 11 to 25 users | Team tier |
| 25+ users | Enterprise evaluation recommended |

3. Recommended approach based on approval needs

| Requirement | Recommended Approach (by tier/subscription level) |
| --- | --- |
| No formal approval process | Pro/Plus tiers are sufficient |
| Manager review before publishing | Team tier with workspace organization |
| Legal/compliance review required | Claude Team or Enterprise (in my opinion, Claude offers stronger privacy and governance controls for enterprise teams) |
| SOC 2/HIPAA compliance | Enterprise tier with BAA (both Claude and ChatGPT offer) |
| Audit trail mandatory | Enterprise tier with audit logs (both Claude and ChatGPT offer) |

All in all? The right budget split between Claude and ChatGPT ultimately depends on your primary use case.

Now that I’ve covered the financial considerations, let’s get into the practical application: when to use Claude, ChatGPT, or both in one stack.

When to use Claude, ChatGPT, or both in one stack

Claude and ChatGPT are both great; I know it’s a difficult decision to choose one LLM over the other. However, choosing just one isn’t always necessary.

To determine whether to adopt one tool, the other, or both, use the decision matrix below:

| Use Case | Recommended Tool | Why |
| --- | --- | --- |
| Blog posts and long-form content | Claude | Claude excels at long-form content editing and handling complex context |
| Email sequences and newsletters | Both | ChatGPT for volume, Claude for personalization logic |
| Social media content | ChatGPT | ChatGPT is best for rapid ideation, email copy, and social content |
| SEO briefs and research synthesis | Claude | Processes competitor data and source documents in a single context window |
| Ad copy and landing pages | ChatGPT | Faster iteration on short-form variants and hooks |
| Brand voice enforcement | Claude | Better tone consistency across extended content |
| Marketing automation scripts | Both | ChatGPT for speed, Claude for accuracy |
| Compliance-sensitive content | Claude | Claude offers stronger privacy and governance controls for enterprise teams |
| Visual content ideation | ChatGPT | ChatGPT supports multimodal content generation, including images and code |
| Customer-facing chatbots | Both | ChatGPT for speed, Claude for nuanced responses |

Still unsure of which tool is best for your team? To help you make a confident choice, here’s a quick-reference guide based on role:

1. SMB Marketer

Is Claude better than ChatGPT for a solo marketer? Not necessarily. Speed and cost efficiency matter most at this stage.

- Recommended stack: ChatGPT Plus ($20/month)

- Primary use cases: Social content batching, email drafts, ad copy variants, blog outlines

- When to add Claude: If producing long-form content (whitepapers, ebooks) or working in regulated industries

- Pricing consideration: Single subscription keeps costs manageable; ChatGPT’s broader feature set (images, plugins) provides more value for generalists

- HubSpot integration: Connect ChatGPT to Marketing Hub for draft generation; use Breeze AI for additional content assistance

2. Mid-Market Teams

Both Claude and ChatGPT can be integrated with CRM, MAP, and CMS platforms via API or third-party connectors. Mid-market teams benefit from using both.

- Recommended stack: ChatGPT Team + Claude Pro (roughly $17 to $25/user/month per tool)

- Workflow structure:

  - Content strategists use Claude for briefs and research synthesis

  - Writers use ChatGPT for first drafts

  - Editors use Claude for brand voice refinement

  - Social managers use ChatGPT for post-batching

- Tool allocation: 60% ChatGPT (volume tasks), 40% Claude (quality tasks)

- HubSpot integration: Native Claude connector for editing workflows; ChatGPT via Zapier for automation triggers

3. Enterprise Teams

Claude offers stronger privacy and governance controls for enterprise teams. Compliance-heavy organizations should lead with Claude.

- Recommended stack: Claude Enterprise + ChatGPT Enterprise

- Governance configuration:

  - Claude handles all customer-facing content, regulated materials, and data-informed personalization

  - ChatGPT handles internal ideation, creative brainstorming, and non-regulated content

  - All outputs route through Marketing Hub approval workflows before publication

- Security requirements: SSO integration, audit logging, data retention controls, PII exclusion protocols

- Integrations: API-level integration with middleware transformation layer; no direct PII exposure to either model

- HubSpot integration: Both connectors active; content staging in Marketing Hub with role-based approval gates

4. Agency (multiple clients, varied brand requirements)

HubSpot enables seamless integration of Claude and ChatGPT into marketing workflows. Agencies need both tools to serve diverse client needs.

- Recommended stack: ChatGPT Team + Claude Team (scale seats to team size)

- Client allocation model:

  - High-volume, speed-priority clients → ChatGPT-dominant workflow

  - Brand-sensitive, premium clients → Claude-dominant workflow

  - Compliance-heavy clients (finance, healthcare, legal) → Claude only

- Social media retainers: ChatGPT for batching, light Claude review

- Blog content: ChatGPT drafts, Claude edits

- Whitepapers and reports: Claude end-to-end

- Email campaigns: ChatGPT for variants, Claude for sequence logic

- HubSpot integration: Separate Marketing Hub portals per client; configure the Claude connector and ChatGPT automation per client brand requirements

How to integrate Claude and ChatGPT with your stack and HubSpot

This section provides step-by-step instructions for each integration, starting with the following table that breaks down your options at a glance:

| Method | Technical Skill Required | Best For | Setup Time |
| --- | --- | --- | --- |
| Native HubSpot Claude connector | Low | Teams already using Marketing Hub | 15 to 30 minutes |
| Zapier/Make middleware | Low-Medium | No-code automation between tools | 1 to 2 hours |
| Direct API integration | High | Custom workflows, high-volume operations | 4 to 8 hours |
| Custom GPTs with HubSpot actions | Medium | ChatGPT-centric teams | 2 to 3 hours |

Alright. I’ve given you a bird’s-eye view of each integration method. Next, let’s dive into the nitty-gritty with a step-by-step walkthrough. Take a look at how to integrate Claude and ChatGPT with your tech stack and HubSpot:

How to set up the native Claude connector with HubSpot

Firstly, HubSpot’s Claude connector provides the fastest path to integration.

Here’s how you’ll connect Claude to HubSpot’s Marketing Hub:

- Navigate to Settings → Integrations → Connected Apps in your HubSpot portal.

- Search for “Claude” in the App Marketplace.

- Click “Connect app” and authenticate with your Anthropic account credentials.

- Select which HubSpot objects Claude can access (contacts, companies, deals, content).

- Configure data permissions based on your team’s privacy requirements.

- Test the connection by running a sample content task.

Once you’ve successfully connected Claude to Marketing Hub, here’s what it will do:

- Pull CRM data into Claude prompts for personalized content generation

- Push Claude-generated content directly to Marketing Hub drafts

- Trigger Claude workflows based on HubSpot events (new lead, deal stage change)

- Maintain audit logs of all AI-assisted content creation

How to set up the native ChatGPT connector with HubSpot

Like the Claude connector, HubSpot’s native ChatGPT integration connects ChatGPT directly to your marketing workflows without middleware.

Here’s how you’ll connect ChatGPT to Marketing Hub:

- Navigate to Settings → Integrations → Connected Apps in your HubSpot portal.

- Search for “ChatGPT” in the App Marketplace.

- Click “Connect app” and authenticate with your OpenAI account credentials.

- Select which HubSpot objects ChatGPT can access (contacts, companies, deals, content).

- Configure data permissions based on your team’s privacy requirements.

- Test the connection by running a sample content generation task.

Once the connector is enabled, here’s what you’ll be able to do:

- Generate email drafts, social posts, and ad copy directly within Marketing Hub

- Pull CRM context into ChatGPT prompts for personalized messaging

- Create A/B test variants for email subject lines and CTAs

- Access ChatGPT’s multimodal capabilities for content ideation alongside text generation

Now that you know how to integrate both tools with HubSpot, let’s address some of the most common questions marketers have about Claude versus ChatGPT.

Frequently asked questions (FAQ) about Claude vs ChatGPT for marketing

Can I use both Claude and ChatGPT in the same marketing workflow?

Yes. Marketing teams achieve best results by using Claude for editing and ChatGPT for drafting. It’s a symbiotic relationship, if you will.

For more clarity, here’s a chart that breaks down how to chain tasks effectively with both LLM platforms:

| Stage | Tool | Task |
| --- | --- | --- |
| Ideation | ChatGPT | Generate topic lists, outline variations, and hook concepts |
| First draft | ChatGPT | Produce initial copy at speed |
| Structural edit | Claude | Reorganize flow, eliminate redundancy, strengthen arguments |
| Brand voice polish | Claude | Apply tone guidelines across the full document |
| Format adaptation | ChatGPT | Convert approved copy into social posts, email variants, and ad copy |

I’ll acknowledge that integrating either of these LLMs with a CRM/CMS system can be daunting. So, to make it easier, here are a few best practices for keeping them in sync:

- Use Zapier or Make to trigger workflows between tools. Example: New draft in Google Docs → Claude API for editing → HubSpot CMS for staging.

- Store all finalized content in your CMS as the single source of truth—never in AI chat histories.

- Tag AI-assisted content in your CMS with metadata (tool used, draft version, approval status) for audit trails.
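To show what that audit metadata could look like in practice, here is a minimal sketch of a content record with a publish gate. The `ContentRecord` class, its field names, and the status values are hypothetical; your CMS will have its own property names, so read this as a model of the idea rather than a HubSpot schema.

```python
from dataclasses import dataclass

@dataclass
class ContentRecord:
    """Hypothetical audit metadata for one piece of content."""
    title: str
    tool_used: str                     # e.g. "Claude", "ChatGPT", or "Human-only"
    draft_version: int
    approval_status: str = "pending"   # "pending", "approved", or "rejected"

def can_publish(record: ContentRecord) -> bool:
    """Only content a human reviewer has approved may go live."""
    return record.approval_status == "approved"

post = ContentRecord(title="Q3 product update", tool_used="Claude", draft_version=2)
print(can_publish(post))   # False: still pending review

post.approval_status = "approved"
print(can_publish(post))   # True: cleared by a reviewer
```

Defaulting every new record to "pending" means nothing publishes by accident; someone has to approve it explicitly.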

Pro Tip: HubSpot enables seamless integration of Claude and ChatGPT into marketing workflows through Marketing Hub’s native connectors and workflow automation.

Which is better for fact‑checked SEO content?

As I’ve highlighted above, Claude is my go-to for long-form content, and it’s also the stronger option for research synthesis and citation accuracy. ChatGPT is best for rapid ideation, email copy, and social content where speed outweighs verification depth.

Assuming that you’ll be using Claude, here’s a practical verification workflow that you can use to ensure accuracy:

- Research phase: Use Claude with web search enabled to gather sources. Claude provides citations and flags uncertainty.

- Draft phase: Generate content in either tool based on speed needs.

- Fact-check phase: Paste draft into Claude with the prompt: “Identify every factual claim in this content. For each claim, state whether it’s verifiable, provide a source if possible, and flag any statements that require human verification.”

- Source audit: Manually cross-reference Claude’s flagged claims against primary sources.

- Final review: Run completed content through Claude to confirm no new unsupported claims were introduced during editing.

However, if you’re still on the fence about which LLM handles the heavy lifting on SEO content best, then consider this:

- Favor Claude for statistics, quotes, historical facts, and technical specifications. Claude’s training emphasizes accuracy over confidence.

- Favor ChatGPT for general knowledge framing, introductions, and transitional content where factual precision matters less.

How do I keep AI outputs on‑brand across channels?

In my opinion, a consistent brand voice requires a documented system, not ad-hoc prompting.

That said, here’s a brand voice system setup you’ll use to keep AI outputs – whether they be for blogs, emails, or social posts – consistent across channels:

Create a brand voice document containing:

- 5 to 7 tone descriptors with examples (e.g., “Confident but not arrogant: Say ‘We recommend’ not ‘You should’”)

- Approved and banned word lists

- Sentence length and structure preferences

- Channel-specific variations (LinkedIn = more formal; Instagram = more conversational)

Next, configure each tool:

- Claude: Upload the full brand document to a Project. Claude retains it across all conversations within that project.

- ChatGPT: Build a custom GPT with brand rules embedded in the system prompt. Include 3-5 example paragraphs showing ideal tone.

Once the brand voice system above is in place, the next step is a review loop with specific prompts.

Below, I’ve outlined the order in which you’ll run your checks and which tools, as well as prompts, to use:

- Pre-publication check (Claude): “Review this content against our brand voice document. List any phrases that violate our tone guidelines and suggest replacements.”

- Batch audit (ChatGPT): “Score these 10 social posts from 1-5 on brand voice consistency. Flag any scoring below 4 with specific issues.”

- Cross-channel adaptation (Claude): “Rewrite this blog excerpt for LinkedIn, Instagram, and email. Maintain core message but adjust tone per our channel-specific guidelines.”

Lastly, here are some quick tips regarding CMS/CX controls that might be helpful as you utilize these tools:

- Store approved AI prompts as templates in Marketing Hub for team-wide access.

- Require approval workflows for AI-generated content before publication.

- Use content staging to compare AI drafts against previously approved pieces.

What’s the safest way to connect AI models to my CRM data?

The short answer? Safe CRM integration requires architectural discipline regardless of the tool. Never pass raw PII directly to AI models.

| Method | Security Level | Best For |
| --- | --- | --- |
| API with a data transformation layer | Highest | Enterprise teams with developer resources |
| MCP (Model Context Protocol) servers | High | Structured integrations with defined schemas |
| Custom actions via middleware (Zapier/Make) | Medium | Teams without dedicated developers |
| Direct copy-paste | Low | Ad-hoc tasks only; never for PII |

Not super clear on how to separate PII from prompts? Here’s some guidance (in plain English, of course):

- Build a transformation layer that replaces PII with tokens before sending to AI. (Here’s an example: “John Smith, john@company.com” becomes “Customer_A, email_A.”)

- Process AI outputs through reverse transformation to reinsert actual data.

- Never include names, emails, phone numbers, addresses, or account numbers in prompts.

- Use aggregated or anonymized data for analysis tasks. (For example, prompt with “Analyze engagement patterns for enterprise segment,” not “Analyze John Smith’s email history.”)
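For the developers on your team, the transformation layer described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: it only handles email addresses (via a basic regex) and names you pass in explicitly, it numbers tokens (`Customer_1`, `email_1`) instead of lettering them, and the function names `tokenize_pii` and `restore_pii` are hypothetical. A production layer would need to cover phone numbers, addresses, account numbers, and more.

```python
import re

# Simplified email pattern; an assumption for illustration, not a full RFC-compliant matcher
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

def tokenize_pii(text: str, known_names: list[str]) -> tuple[str, dict[str, str]]:
    """Swap emails and known names for placeholder tokens; return safe text plus the token map."""
    mapping: dict[str, str] = {}

    def replace_email(match: re.Match) -> str:
        # Emails are processed first, so the map holds only email tokens at this point
        token = f"email_{len(mapping) + 1}"
        mapping[token] = match.group(0)
        return token

    safe = EMAIL_RE.sub(replace_email, text)
    for index, name in enumerate(known_names, start=1):
        if name in safe:
            token = f"Customer_{index}"
            mapping[token] = name
            safe = safe.replace(name, token)
    return safe, mapping

def restore_pii(text: str, mapping: dict[str, str]) -> str:
    """Reverse transformation: put the real values back into the AI output."""
    # Longest tokens first, so "email_1" never clobbers part of "email_10"
    for token in sorted(mapping, key=len, reverse=True):
        text = text.replace(token, mapping[token])
    return text

raw = "John Smith (john@company.com) renewed last week."
safe, mapping = tokenize_pii(raw, known_names=["John Smith"])
print(safe)                        # Customer_1 (email_1) renewed last week.
print(restore_pii(safe, mapping))  # the original sentence, restored
```

Only the `safe` string should ever reach the model; the `mapping` stays on your side and is applied to whatever the model sends back.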

Lastly, because it never hurts to be extra cautious, here are a few extra tips on using first-party data safely:

- Behavioral data (pages viewed, content downloaded, email engagement) can inform personalization prompts without exposing identity.

- Segment descriptions are safe: “Software buyer, 50-200 employees, evaluated competitor X.”

- Purchase history summaries work: “Customer for 2 years, purchased products A and B, average order $5,000.”

How do I measure AI impact without over‑attributing?

Here’s the thing: AI accelerates production, but doesn’t guarantee outcomes. Measure efficiency gains separately from performance improvements to avoid false attribution.

That said, here are a few efficiency metrics that are directly attributable to AI:

- Time from brief to first draft (hours saved)

- Content volume produced per week/month

- Revision cycles before approval

- Cost per content piece (tool subscription ÷ output volume)
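The cost-per-piece formula in the last bullet is simple division, but it helps to see it with numbers. In this sketch, the team size, seat price, and output volume are hypothetical, and `cost_per_piece` is just an illustrative helper name.

```python
def cost_per_piece(monthly_subscription_cost: float, pieces_published: int) -> float:
    """Tool subscription cost divided by output volume, in USD per piece."""
    if pieces_published <= 0:
        raise ValueError("pieces_published must be positive")
    return monthly_subscription_cost / pieces_published

# Hypothetical team: 5 seats at $25/user/month, 60 pieces published this month
print(round(cost_per_piece(5 * 25, 60), 2))   # 2.08
```

Track this number month over month: if it rises while seat count stays flat, the team is publishing less per subscription dollar.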

Now, if you’re using AI for marketing-related tasks, there are other metrics to track as well. Below, I’ve also outlined outcome metrics (just to clarify, these metrics are influenced by AI, not caused by it):

- Click-through rates on AI-assisted versus human-only content

- Conversion rates by content type

- SQLs generated from AI-assisted campaigns

- Engagement rates (time on page, scroll depth, shares)

To help you stay organized, I’ve created a simple, easy-to-use campaign reporting framework. It works like this:

- Tag content by production method in your CMS: “AI-drafted,” “AI-edited,” “Human-only.”

- Run parallel tests when possible. Same campaign, same audience segment, different production methods.

- Track leading indicators first. Speed and volume improvements are immediately apparent. CTR and conversion changes take 30-90 days to reach statistical significance.

- Isolate variables. AI-assisted content may perform differently because of topic selection, not AI quality. Compare like-for-like content types.
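If you run a parallel test and want to know whether a CTR gap is real or just noise, one common approach is a two-proportion z-test. The sketch below is one way to do it with Python’s standard library; the click and send counts are made up, and the 0.05 threshold is a conventional choice, not a rule from either tool.

```python
import math

def two_proportion_z_test(clicks_a: int, sends_a: int, clicks_b: int, sends_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two click-through rates."""
    rate_a = clicks_a / sends_a
    rate_b = clicks_b / sends_b
    # Pooled rate under the assumption that both variants perform the same.
    # Note: if there are zero clicks (or all clicks) overall, the standard error is 0
    # and this raises ZeroDivisionError.
    pooled = (clicks_a + clicks_b) / (sends_a + sends_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sends_a + 1 / sends_b))
    z = (rate_a - rate_b) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical test: AI-assisted email gets 120 clicks from 4,000 sends (3.0%),
# human-only email gets 100 clicks from 4,000 sends (2.5%)
z, p = two_proportion_z_test(120, 4000, 100, 4000)
print(f"z = {z:.2f}, p = {p:.3f}")
print("significant at 0.05" if p < 0.05 else "not significant yet; keep collecting data")
```

In this made-up example, a half-point CTR lift on 4,000 sends per variant is not enough to clear the 0.05 bar, which is exactly why outcome metrics need a longer reporting window than efficiency metrics.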

Reporting cadence:

- Weekly: Efficiency metrics (volume, speed, cost)

- Monthly: Engagement metrics (CTR, time on page)

- Quarterly: Outcome metrics (conversions, SQLs, revenue influence)

Claude vs. ChatGPT: Who’s the real winner?

Despite my personal opinions about which LLM I prefer, when it comes to marketing teams more broadly, here’s my honest take: there isn’t one.

After comprehensively walking you through pricing tiers, integration methods, use cases, and governance considerations, my answer remains the same as it was at the start – the best tool depends on the task at hand.

Claude excels at long-form content editing and handling complex context, making it your go-to for:

- Blog posts

- Whitepapers

- Brand voice enforcement

- Compliance-sensitive content

On the flip side, ChatGPT is best for:

- Rapid ideation

- Email copy

- Social content

But, honestly, here’s what I hope you take away from this guide: Claude versus ChatGPT for marketing isn’t a competition. It’s a collaboration. So, who’s the real winner? The marketing team that learns when to strategically deploy each tool.

Whether you’re drafting email sequences, building SEO briefs, creating enablement decks, or scaling social content, you now have the frameworks, checklists, and decision matrices to make confident choices.

Ready to put your AI-assisted content to work? Get started with HubSpot’s Marketing Hub to integrate Claude and ChatGPT into your workflows, automate approvals, and measure the impact of every piece of content you create — all from one platform.